
Your account is not affected by this issue and has an active subscription. Can you mail us (support@lowepost.com) with the issue you are experiencing so our support team can look into it?

-

Thank you for reaching out. Sometimes when a subscription expires it gets "stuck" in the system and requires a manual cleanup, which we do from time to time. I have cleared it for you.

-

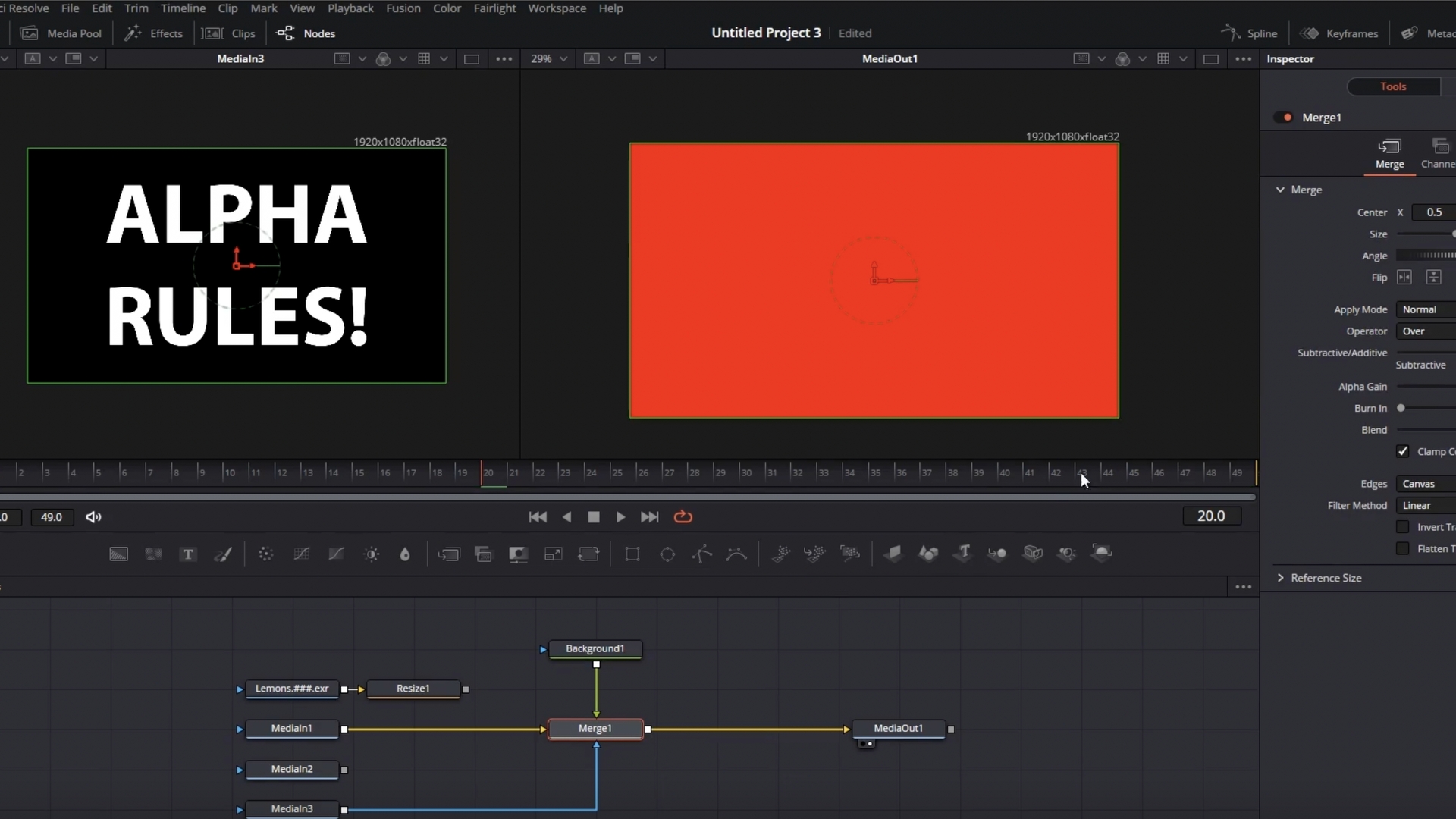

This focused course teaches colorists how to properly prepare, export, and manage VFX shots within a professional color pipeline. You’ll learn how to deliver clean, editable plates to VFX teams and how to receive and reintegrate VFX renders with precision. The lessons cover technical essentials every colorist needs to avoid pipeline mistakes that can cost time, money, and image quality.

Many colorists overlook VFX workflow details, but incorrect exports, faulty alphas, or poor matte handling can lead to broken composites, mismatched grades, or delays in post-production. Understanding how to prep and render alpha-based footage ensures seamless collaboration with VFX artists, protects your grade, and elevates the overall project quality.

The footage used in this course is available for download.

About the instructor

Lee Lanier has created visual effects on numerous feature films for Walt Disney Studios and PDI/DreamWorks. Lee is a world-renowned expert in the video effects field, has written several popular high-end software books, and has taught at the Gnomon School of Visual Effects in Hollywood.

Who is this course designed for?

- Post Production professionals
- Colorists
- DaVinci Resolve users
- Finishing artists

-

Course description

Learn proven strategies and take your color grading game to the next level. The DaVinci Resolve Master Tips course uncovers the nitty-gritty technical details and insider knowledge that will take your color grading to new heights. You will learn techniques, shortcuts, little-known tricks and strategies that will empower you to work more efficiently, inspire you, and give you more freedom to focus on your creative expression.

The course is designed for intermediate to advanced users who possess a strong foundation in Resolve, and is particularly valuable for colorists working on feature films and TV dramas, as it offers insights tailored to their specific needs. Don’t miss out on this opportunity to push the boundaries of your craft on your journey towards mastering the art of color grading.

About the instructor

The instructor is Dylan R. Hopkin, a renowned senior colorist at one of Europe’s leading post-production houses, Nordisk Film Shortcut Oslo. Hopkin brings a wealth of industry expertise to the table, with an impressive resume of feature films and TV dramas. He also has extensive experience as an instructor, having taught color grading at advanced levels in several prestigious international film schools. Hopkin is a Certified DaVinci Resolve Trainer.

Course content

- Lesson 01: Stay Structured
- Lesson 02: Smart Navigation
- Lesson 03: Complex Versioning
- Lesson 04: The Viewer
- Lesson 05: Still Hack
- Lesson 06: Tracking data and attributes
- Lesson 07: Essential nodes
- Lesson 08: Complex Key Setup
- Lesson 09: Miscellaneous tools
- Lesson 10: Post Group Tools

-

Dylan R. Hopkin is a renowned senior colorist working at one of Europe's leading post-production facilities, Nordisk Film Shortcut Oslo. He brings a wealth of color grading expertise to the table from numerous feature films and TV dramas. In this masterclass he focuses on the "whys" of color grading and discusses the artistic decisions behind shots and scenes. It answers questions such as where to place the highlight levels, how to avoid "fake" skin tones, how to choose the hues of elements in a scene, and how saturated images should be.

About the instructor

The instructor, Dylan R. Hopkin, is one of Scandinavia's most sought-after colorists and has colored an impressive list of European feature films and TV dramas. He also brings extensive experience as a color grading instructor, having taught grading in several prestigious international film schools, and is a certified DaVinci Resolve trainer.

Who is this course designed for?

- Colorists at all levels

The Gradelab plugin mentioned (but not used) in the courses can be purchased at Ravengrade.com.

-

Welcome to our online Avid Media Composer Assistant Editor Training, led by renowned industry expert Kevin P. McAuliffe. If you're an aspiring assistant editor, or a filmmaker looking to strengthen your post-production skills, this training is tailor-made for you. In this comprehensive course, we'll equip you with the knowledge and techniques needed to excel as an assistant editor using Avid Media Composer. The training covers all essential aspects of assistant work, with a special focus on conform tasks, challenges and obstacles, and seamless round-tripping with DaVinci Resolve.

About the instructor

Kevin P. McAuliffe is a renowned expert in post-production, specializing in Avid Media Composer and DaVinci Resolve. With extensive experience, he's a sought-after instructor known for his clear teaching style and practical insight. Kevin is not only an instructor but also a working professional who is active in industry forums and a regular participant at conferences and events. His engagement with the larger editing community allows him to stay connected and continuously refine his understanding of the evolving editing landscape.

Who is this course designed for?

- Avid Editors
- Aspiring Assistant Editors
- Post-production artists

Lessons overview

- 01: Source settings and link setups
- 02: Frameflex and transcoding
- 03: Decompose, relinking and final transcoding
- 04: Grade before the LUT and utilizing camera frame rates
- 05: Creating dailies in DaVinci Resolve and Avid round-tripping
- 06: Various frame rates when round-tripping
- 07: List tool and creating spreadsheets with timeline information

-

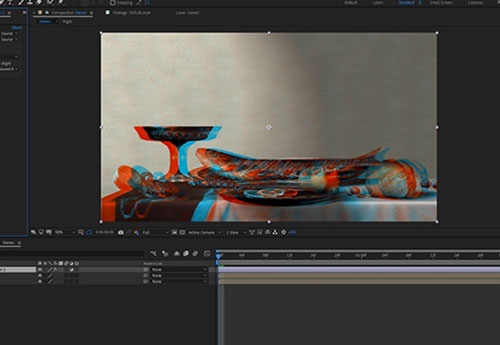

My interest in stereoscopic imaging started in 2006. One of my close friends, Trevor Enoch, showed me a stereo-graph that was taken of him while out at Burning Man. I was blown away and immediately hooked. I spent the next four years experimenting with techniques to create the best, most comfortable, and immersive 3D I could.

In 2007, I worked on Hannah Montana and Miley Cyrus: Best of Both Worlds Concert, directed by Bruce Hendricks and shot with cameras provided by Pace. Jim Cameron and Vince Pace were already developing the capture systems for the first “Avatar” film. The challenge was that a software package had yet to be created to post stereo footage. To work around this limitation, Bill Schultz and I slaved two Quantel IQ machines to a Bufbox to control the two color correctors simultaneously. This solution was totally inelegant, but it was enough to win us the job from Disney. Later during the production, Quantel came out with stereo support, eliminating the need to color each eye on independent machines.

We did what we had to in those early days. When I look back at that film, there is a lot that I would do differently now. It was truly the wild west of 3D post, and we were writing the rules (and the code for the software) as we went. Over the next few pages I’m going to lay out some basics of 3D stereo imaging. The goal is to have a working understanding of the process and technical jargon by the end. Hopefully I can help some other post professionals avoid a lot of the pitfalls and mistakes I made as we blazed the trail all those years ago.

Camera 1, Camera 2

Stereopsis is the term that describes how we collect depth information from our surroundings using our sight. Most everyone is familiar with stereo sound: two separate audio tracks played simultaneously out of two different speakers.
We can take that information in using both of our ears (binaural hearing) and create a reasonable approximation of the direction that sound is coming from in space. This approximation is calculated from the offset in time of the sound hitting one ear vs the other.

Stereoscopic vision works in much the same way. Our eyes have a point of interest. When that point of interest is very far away, our eyes are parallel to one another. As we focus on objects that are closer to us, our eyes converge. Do this simple experiment right now. Hold up your finger as far away from your face as you can. Now slowly bring that finger towards your nose, noting the angle of your eyes as it gets closer to your face. Once your finger is about 3 inches away from your face, alternately close one eye and then the other. Notice the view as you alternate between your eyes: camera 1, camera 2, camera 1, camera 2. Your finger moves position from left to right. You also see “around” your finger more in one eye vs the other. This offset between your two eyes is how your brain makes sense of the 3D world around you. To capture this depth for films we need to recreate this system by using two cameras spaced roughly the same distance apart as your eyes.

Camera Rigs

The average interpupillary distance is 64mm. Since most feature-grade cinema cameras are rather large, special rigs for aligning them together need to be employed. Side-by-side rigs are an option when your cameras are small, but when they are not you need to use a beam splitter configuration.

Beam splitter rig in an "over" configuration.

Essentially, a beam splitter rig uses a half-silvered mirror to “split” the view into two. This allows the cameras to shoot at a much closer inter-axial distance than they would otherwise be able to using a parallel side-by-side rig. Both of these capture systems are for the practical shooting of 3D films.

Image comes in from position 1. It passes through to the camera at position 2.
It is also reflected to the camera at position 3. You will need to flip it in post since the image is mirrored.

Fortunately or unfortunately, most 3D films today use a technique called Stereo Conversion, which is the process of transforming 2D ("flat") film to a 3D form.

Conversion

There are three main techniques for Stereo Conversion.

Roto and Shift

In this technique, characters and objects in the frame are roto’d out and placed in a 3D composite in virtual space. The scene is then re-photographed using a pair of virtual cameras. The downside to this is that the layers often lack volume and the overall effect feels like a grade school diorama.

Projection

For this method, the 2D shot is modeled in 3D space. Then, the original 2D video is projected onto the 3D models and re-photographed using a pair of virtual cameras. This yields very convincing stereo and looks great, but it can be expensive to generate the assets needed to create complex scenes.

Virtual World

Stupid name, but I can’t really think of anything better. In this technique, scenes are created entirely in 3D programs like Maya or 3DS Max. As this is how most high-end VFX are created for larger films, some of this work is already done. This is the best way to “create” stereo images since the volumes, depth and occlusions mimic the real world. The downside to this is that if your 2D VFX shot took a week to render in all of its ray-traced glory, your extra “eye” will take the same.

Cartesian Plane

No matter how you acquire your stereo images, eventually you are going to take them into post-production. In post, I make sure the eyes are balanced for color between one another. I also “set depth” for comfort and to creatively promote the narrative.

In order to set depth we have to offset one eye against the other. Objects in space gain their depth from the relative offset in the other eye/view. In order to have a consistent language, we describe this depth as a number of pixels of offset.
When we discuss 2D images we use pixel values that are parallel with the screen. A given coordinate pair locates the pixel along the screen's surface.

Once we add the third axis we need to think of a Cartesian plane lying down perpendicular to the screen. Positive numbers recede away from the viewer into the screen. Negative numbers come off the screen towards the viewer.

The two views are combined for the viewing system. The three major systems are Dolby, RealD, and XpanD. There are others, but these are the most prevalent in theatrical exhibition.

In post we control the relative offset between the two views using a “HIT”, or horizontal image transform, which is a very complicated way of saying we move one eye right or left along the X axis. The value of the offset dictates where in space the object will appear.

This rectangle is traveling from +3 pixels offset to -6 pixels offset.

Often we will apply this move symmetrically to both eyes. In other words, to achieve a -6 pixel offset, we may move both views by 3 pixels each instead of moving one view by 6.

Using this offset we can begin to move comped elements or the entire “world” in Z space. This is called depth grading. Much like color, our goal is to make the picture feel consistent without big jumps in depth. Too many large jumps can cause eye strain and headaches. My first rule of depth grading is “do no harm.” Pain should be avoided at all costs. However, there is another aspect of depth grading beyond the technical side. Often we use depth to promote the narrative. For example, you may pull action forward to be more immersed in the chaos, or you can play quiet drama scenes at screen plane so that you don’t take away from the performance. Establishing shots are best played deep for a sense of scale. All of these examples are just suggestions, not rules. Just my approach.

Once you know the rules, you are allowed to break them as long as it’s motivated by what’s on screen.
I remember one particular shot in Jackass 3D where Bam gets his junk whacked. I popped the offset towards the audience just for that frame. I doubt anybody noticed other than a select circle of 3D nerds (I’m looking at you, Captain 3D), but I felt it was effective in making the pain on screen “felt” by the viewer.

Floating Windows

Floating windows are another tool that we have at our disposal while working on the depth grade. When we “float the window”, what we are actually doing is controlling the proscenium in depth, just like we were moving the “world” while depth grading. Much like depth offsets, floating windows can be used for technical and creative reasons.

Firstly, they are most commonly used for edge violations. An edge violation is where there is an object that is “in front” of the screen in Z space but is being occluded by the edge of the screen. Now our brains are smarter than our eyeballs and kick into override mode. The edge of the broken picture feels uncomfortable and all sense of depth is lost. What we do to fix this situation is move the edge of the screen forward into the theater using a negative offset. This floats the “window” we are looking through in front of the offending object, and our eyes and brain are happy again. We achieve a floating window through a crop or by using the software’s “window” tool.

Another use for controlling the depth of the proscenium is to creatively enhance the perceived depth. Often, you need to keep a shot at a certain depth due to what is on either side of the cut but creatively want it to feel more forward. A great workaround is to keep your subject at the depth that feels comfortable relative to the surrounding shots and move the “screen” back into positive space. This can have the effect of feeling as if the subject is in negative space without actually having to place them there.
Conversely, you can float the window into negative space on both sides to create the feeling of distance even if your character or scene is at screen plane with a zero offset.

The fish will have the feeling of being off screen even though it’s behind.

Stereo Color Grading

Stereo color grading is an additional step, compared to standard 2D finishing, which needs to be accomplished after the depth grade is complete. It is much more challenging to match color from one eye to the other on natively shot 3D footage. Reflections or flares may appear in one eye and not the other. We call this retinal conflict. One fix for such problems is to steal the “clean” information from one eye and comp it over the offending one, paying mind to offset it for the correct depth.

Additionally, any shapes that were used in the 2D grade will have to be offset for depth. Most professional color grading software has automated ways to do this. In rare instances, an overall color correction is not enough to balance the eyes. When this occurs, you may need a localized, block-based color match like the one found in the Foundry’s Ocula plugin for Nuke.

Typically a 4.5FL and a 7FL master are created with different trim values. In recent years, a 14FL version is also created for stereo laser projection and Dolby’s HDR projector. In most cases this is as simple as a gamma curve and a sat boost.

The Future of Stereo Exhibition

The future for 3D resides in even deeper immersive experiences. VR screens are becoming higher in resolution and, paired with accelerometers, are providing a true “being there” experience. I feel that the glasses and apparatus required for stereo viewing also contributed to its falling out of vogue in recent years. I’m hopeful that new technological enhancements and a better, more easily accessible user experience will lead to another resurgence in the coming years. Ultimately, creating the most immersive content is a worthy goal.
Thanks for reading and please leave a comment with any questions or differing views. They are always welcome. By John Daro
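As a small illustration of the HIT and the symmetric split described in the Cartesian Plane section, here is a minimal Python sketch. It is not taken from any grading system. The function names `split_offset` and `shift_row` are hypothetical, and the sign convention (offset = right-eye position minus left-eye position, so positive values sit behind the screen) is an assumption chosen to match the positive/negative description in the article.

```python
def split_offset(total_px):
    """Split a desired pixel offset symmetrically between the two eyes.

    Assumed convention: offset = right-eye x minus left-eye x, so a
    negative offset places the object in front of the screen.
    Returns (left_shift, right_shift) in pixels.
    """
    left_shift = -(total_px // 2)
    right_shift = total_px + left_shift
    return left_shift, right_shift


def shift_row(row, dx, fill=0):
    """Horizontal image transform on one row of pixels.

    Positive dx moves the content right, negative dx moves it left.
    Pixels uncovered by the move are filled with `fill`.
    """
    n = len(row)
    if dx >= 0:
        return [fill] * min(dx, n) + row[:max(n - dx, 0)]
    return row[min(-dx, n):] + [fill] * min(-dx, n)


# A -6 pixel offset applied symmetrically: each eye moves 3 pixels,
# in opposite directions.
left_dx, right_dx = split_offset(-6)
left_row = shift_row(list(range(10)), left_dx)
right_row = shift_row(list(range(10)), right_dx)
```

Splitting the move between both eyes keeps the subject centred in frame, at the cost of losing a few pixels on both edges of each view instead of on one edge of a single view.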

-

The Avid DNxHR and Apple ProRes codec families are designed to meet the needs of modern, streamlined post-production workflows. These days we capture source material on a variety of cameras (action cams, smartphones, drones and high-resolution cameras), and these codecs make it easy to work with any format. With the growing demand for 4K deliveries, we need fast and reliable codecs that ensure real-time playback while maintaining superior image quality.

Both the DNxHR and ProRes families offer a variety of codecs for different compressions, data rates and file sizes: some with just enough image information for editing, others for high-quality color grading and finishing, and lossless ones for mastering and archiving. Below is the full list of codecs from both families.

| Codec | Color sampling | Usage |
| --- | --- | --- |
| DNxHR 444 | 4:4:4 | Finishing |
| DNxHR HQX | 4:2:2 | Finishing |
| DNxHR HQ | 4:2:2 | Mezzanine* |
| DNxHR SQ | 4:2:2 | SQ Editorial |
| DNxHR LB | 4:2:2 | LQ Editorial |
| ProRes 4444 XQ | 4:4:4 | Finishing |
| ProRes 4444 | 4:4:4 | Finishing |
| ProRes 422 HQ | 4:2:2 | Mezzanine* |
| ProRes 422 | 4:2:2 | Mezzanine* |
| ProRes 422 LT | 4:2:2 | SQ Editorial |
| ProRes 422 Proxy | 4:2:2 | LQ Editorial |

* In this case, Mezzanine means a compressed file that can be used to produce additional compressed files, but it is not necessarily useful for finishing work.

Codec facts:

- DNxHR 444, ProRes 4444 and ProRes 4444 XQ are the only codecs with embedded alpha channels.
- DNxHR 444 and ProRes 4444 XQ are the only codecs that fully preserve the details needed in HDR (high-dynamic-range) imagery.
- Both codec families are resolution independent, but bitrate will vary depending on whether you output a proxy file or a higher-resolution file.
- Both codec families can be wrapped inside MXF or MOV containers.

For more detailed specifications: Full DNxHR codec list, Full ProRes codec list

Codec differences

DNxHR and ProRes were optimized to be visually lossless through many generations of decoding and re-encoding. Some claim to have noticed performance differences, but studies have shown that the quality and speed differences are negligible.

An important difference, however, is that some of the major editing and finishing systems lack support for ProRes encoding on Windows. This means Windows users can read a ProRes-encoded file but in some cases cannot export one. For this reason, many post-production facilities have abandoned ProRes and implemented a full DNxHR workflow. Some systems, such as the Adobe programs, Nuke and Scratch, are fully supported by Apple, but DNxHR is accessible universally.

Another important reason for the success of DNxHR is that Avid can read the files natively from its own MXF file structure. This eliminates the need to import clips and render timelines.

Lowepost

-

CDL stands for “Color Decision List”. It is a metadata format developed by the American Society of Cinematographers (ASC) in order to exchange rudimentary colour correction information between postproduction tools.

It is sometimes necessary to apply non-destructive look communication instead of colour correcting an image directly. The colour correction is expressed as metadata, and the image is transferred without any creative decisions. This greatly simplifies the versioning of looks, because simple metadata can be updated without the need to re-transfer image data.

CDLs are very common in VFX workflows because the VFX artist needs both the ungraded shot and the intended look. The ungraded shot allows the artist to comp in truly linear light, and the intended look is needed to check whether the individual plates still hold together after the grade is applied.

Slope, Offset and Power

CDL defines a parameterised function to individually modify the red, green, and blue channels of an image. In addition, CDL specifies a global saturation.

The three tone curve parameters are Slope, Offset and Power. These parameters are simple mathematical operations which allow the colourist to modify the incoming data.

- “Slope” is a multiplier to the incoming data
- “Offset” is a summation to the incoming data
- “Power” is a power function to the incoming data

The formula is:

out.rgb = (in.rgb * Slope.rgb + Offset.rgb) ^ Power.rgb

- in.rgb = input red, green, blue values
- out.rgb = output red, green, blue values
- Slope.rgb = Slope red, green, blue values
- Offset.rgb = Offset red, green, blue values
- Power.rgb = Power red, green, blue values

A fourth parameter, “Saturation”, is applied by converting the out.rgb data into a Luma and a Chroma component. The Chroma signal is then multiplied by the “Saturation” parameter.

Film Grade and Video Grade

With Slope and Offset you can produce both a Film Grade “Exposure” and “Contrast” and a Video Grade “Lift” and “Gain”.
- Exposure is achieved by Offset
- Contrast is achieved by a combination of Offset and Slope
- Gain is achieved by Slope
- Lift is achieved by a combination of Offset and Slope
- Gamma is achieved by Power

Formats

A CDL grade is specified by ten values, and they can be stored in different formats.

- Slope.red
- Slope.green
- Slope.blue
- Offset.red
- Offset.green
- Offset.blue
- Power.red
- Power.green
- Power.blue
- Saturation

.cdl

.cdl is a text file (with the suffix “cdl”). It has an XML-like structure. It defines one CDL grade and looks something like:

    <ColorDecisionList>
      <ColorDecision>
        <ColorCorrection>
          <SOPNode>
            <Slope>0.904771 0.931037 1.011883</Slope>
            <Offset>0.008296 0.017804 -0.026100</Offset>
            <Power>1.052651 1.005324 0.945201</Power>
          </SOPNode>
          <SatNode>
            <Saturation>0.801050</Saturation>
          </SatNode>
        </ColorCorrection>
      </ColorDecision>
    </ColorDecisionList>

.ccc

.ccc is the same concept but can contain multiple CDL structures.

.ale

CDL values can be stored per shot in an Avid Log Exchange.

.edl

CDL values per shot can be stored in a CMX 3600 Edit Decision List.

Colour Management

Unfortunately, CDL does not define the image state and colour management pipeline in which this formula is applied. That means these ten values describe a colour correction but do not describe the way you apply the values. The values applied in a Log Colour Space with a viewing transform on output will produce a different result than the same values applied in Display Space after the viewing transform.

This is the reason why CDL values sometimes do not match between applications. In order to make CDL work, you need an additional Colour Management Layer that transforms the image into the correct state, applies the CDL values and eventually converts the image into another image state. In the field, there are different colour management frameworks like “OpenColorIO” or “Truelight Colour Spaces”.
Some productions also just create “Lookup Tables” for the input and output transformations and apply the CDL values by hand.

Conforming

The only way to automatically apply CDL values to multiple shots is to use ALE and EDL. This makes CDL applicable only in very narrow workflows. .cdl and .ccc files do not store camera metadata, which makes it impossible to “bulk paste” CDL values to multiple shots. Often manual copy and paste takes place, which is a dissatisfying and time-consuming task. Some tools offer smarter copy and paste functionality if the .cdl files are named after the filenames or clip names of the shots, so that the conforming metadata is stored in the filename. Big VFX houses use their asset management systems to deploy the CDL values to the correct shots.

Conclusion

CDL is a simple concept to describe and communicate a simple colour correction transformation in a software-agnostic way. It does not specify or communicate the needed colour management information, nor does it store any shot-based metadata. In order to make CDL work, a production needs an additional colour management and asset management pipeline to successfully distribute CDL values between software packages.

Daniele Siragusano
Colour and Workflow Engineer, FilmLight
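To make the Slope, Offset, Power and Saturation steps from the article concrete, here is a minimal Python sketch that reads the sample .cdl text shown above and applies it to one RGB triple. Several things are assumptions rather than statements from the article: the function names `parse_cdl` and `apply_cdl` are hypothetical, the luma weights are the Rec. 709 ones, negative values are clamped to zero before the power step so the result stays real-valued, and the sample has no XML namespace (files written by real tools may declare one, which this simple lookup does not handle).

```python
import xml.etree.ElementTree as ET

CDL_TEXT = """<ColorDecisionList><ColorDecision><ColorCorrection>
<SOPNode>
  <Slope>0.904771 0.931037 1.011883</Slope>
  <Offset>0.008296 0.017804 -0.026100</Offset>
  <Power>1.052651 1.005324 0.945201</Power>
</SOPNode>
<SatNode><Saturation>0.801050</Saturation></SatNode>
</ColorCorrection></ColorDecision></ColorDecisionList>"""

# Assumption: Rec. 709 luma weights for the luma/chroma split.
LUMA_WEIGHTS = (0.2126, 0.7152, 0.0722)


def parse_cdl(text):
    """Return (slope, offset, power, saturation) from namespace-free CDL XML."""
    root = ET.fromstring(text)

    def floats(tag):
        return tuple(float(v) for v in root.find(f".//{tag}").text.split())

    return floats("Slope"), floats("Offset"), floats("Power"), floats("Saturation")[0]


def apply_cdl(rgb, slope, offset, power, saturation):
    """out = (in * slope + offset) ^ power per channel, then scale chroma."""
    sop = tuple(
        max(c * s + o, 0.0) ** p  # clamp is an assumption, see lead-in
        for c, s, o, p in zip(rgb, slope, offset, power)
    )
    luma = sum(w * c for w, c in zip(LUMA_WEIGHTS, sop))
    return tuple(luma + saturation * (c - luma) for c in sop)


slope, offset, power, saturation = parse_cdl(CDL_TEXT)
graded = apply_cdl((0.18, 0.18, 0.18), slope, offset, power, saturation)
```

The sketch deliberately says nothing about image state: the same ten values give different pictures depending on whether `rgb` is log-encoded or display-referred, which is exactly the colour management gap the article describes.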

-

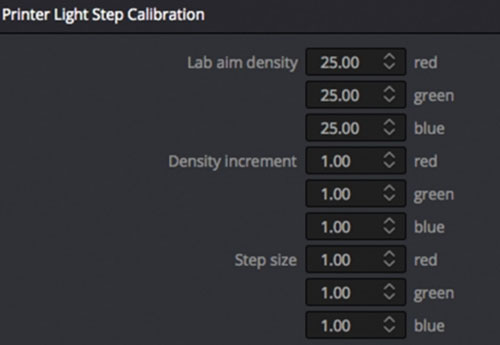

Color timing is the process of balancing the color and density of each shot in a motion picture. This was necessary because motion pictures were filmed out of order from the final edited sequence, over a long period of time and under varying conditions (lighting, exposure and film emulsions). The film negative was then sent to the lab for processing, which had its own variables that affected color and density, such as developing time, temperature and chemical balance. When the film negatives were spliced together into the final sequence to make a film print on positive film stock, the cut negative needed to be analyzed for color and density to set the printer lights for each shot so the final print would be balanced.

Although the technical process of color balancing was done by the color timer, the process of reaching the final color was a collaborative effort involving the timer and the filmmakers: usually the cinematographer, director, editor or other production assistants. This was done by screening film prints with the filmmakers, getting their comments and opinions, then applying corrections to the film timing until final approval was achieved. This would take from 2 or 3 timing passes up to 10 or more, depending on the nature of the film and the demands of the filmmakers.

The eyes of the color timer

The tools needed to color time a film print begin with the eyes of the color timer. Before the first print is made, the film negative is viewed on a film analyzer such as a Hazeltine. This is a machine that reads the negative and presents a positive image on a video monitor. The color and density can be adjusted using knobs which represent the printer lights, usually on a 1 to 50-point scale for each color (Red, Green, and Blue). The timer turns the knobs until the color and density look right, and the printer lights are then set for that shot. This is done for each shot of the film, then a print is made.
The film print is screened by the color timer, who will analyze it by eye and make notes on what needs to be corrected. The film print is then put on a comparator projector, where it can be viewed scene by scene to make color adjustments by changing the printer lights for the next corrected print.

Some timers use color filters to help make color decisions. The color corrections are not seen until another print is made. Originally, these color corrections were written on a timing card which showed the footage and printing lights for each scene. The timing card was sent to a person who would make a paper tape with this information to be loaded into the printing machine. Now this information is entered into the computer by the timer as he makes corrections and is directly accessed by the printing machine.

The length of time to color correct a cut negative film from the first trial to final approval can vary from a couple of weeks to several months. This varies based on the number of scenes, how well it was shot, the need for reshoots or effects shots, and the filmmakers' demands. A typical turnaround from a first trial print to a corrected print, including color correcting and printing, takes several days. An average film would take 3 to 5 passes for approval, which would be about 15 to 20 days in total.

Categories of color timers

- The Daily Timer gets the negatives from each day's shoot (dailies) and, using a film analyzer, sets printer lights for the daily prints.
- The Analyzer or Hazeltine Timer sets the printing lights for the edited (cut) negative to make the first print to be screened.
- The Screen Timer views the first print in a theater on a big screen, taking notes for corrections to be made on the comparator.
- The Comparator Timer (usually the same person as the Screen Timer) views the print on a small projector and applies the corrections based on the Screen Timer's notes.
With all the tools and complexities of today's digital color correction, it is easy to become too focused on micromanaging minor details and lose sight of the big picture. Film color timing was limited to color and density balance, with emphasis on the overall scene-to-scene continuity. I would advise digital colorists to hold off on using specialty tools (secondary correction, windows, and mattes) until the overall continuity of color and density is addressed, so the integrity of the cinematography does not get distorted.

Now that I have been effectively retired from color timing by the closure of film labs and the industry's takeover by digital projection, I can only hope that the "art" of cinematography will go on. Working with some of the great cinematographers throughout my career taught me the importance of the image captured in camera through lighting, lenses, and technique. With the new technologies, it is important that this art does not get distorted by the involvement of too many opinions and people in the digital timing bay. My biggest advice is to listen to the cinematographer.

Jim Passon

-

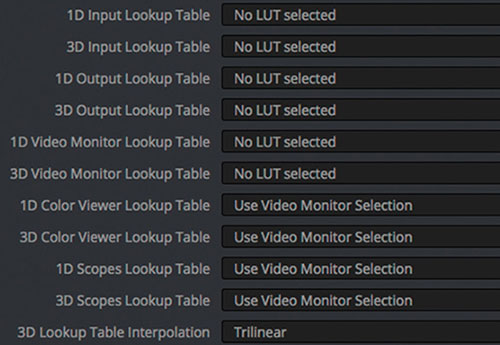

Simply put, a LUT is a Look Up Table. A LUT is used to transform a value (or a set of multiple values, as in RGB) into another value or set.

1D-LUT

Let’s first consider a 1D-LUT. A 1D-LUT is an array of values. For a 10-bit data system (such as the classic 10-bit Cineon Log data system), the values range from 0 to 1023; therefore, a one-dimensional array of 1024 elements can perfectly map each of the possible values into another. If the data is a 16-bit system, the array needs to be 65536 elements long to accommodate all the possible input values. If in either case the size of the array is a problem for the hardware or software, a subsampled array can be used (i.e., instead of 65536 elements for a 16-bit system, 256 can be used, and the missing input values are then interpolated linearly or with another interpolation method). On modern software systems that use floating point data, e.g. OpenEXR, the data to be put through a LUT is converted to properly match the input domain of the LUT system.

In modern color correction, the image is usually a three-channel RGB system or some other three-metric color system. The same 1D-LUT can be applied to all three channels, as in the case of a 1D-LUT used to convert from log data to linear data. Alternatively, a separate 1D-LUT can be applied to each channel, for example if the gain or color shift of each channel needs to change. In each case described, the channels are treated as independent: a change in one channel does not alter any other channel.

1D-LUTs are normally used where the desired transform can adequately be described by channel-independent processing (scale or RGB metric conversions, overall color changes like the ASC CDL [except for the saturation parameter]).

3D-LUT

In most color data transformations, the three channels are dependent. Changes in one channel will alter the others.
However, to use the same type of data set as a 1D-LUT, a 3-dimensional array that points to a three-element set would be required. For a 10-bit system with 1024 elements, that would be an array with 1024^3 elements (1,073,741,824), each pointing to three numbers! And for a 16-bit system that would be 65536^3 (281,474,976,710,656). Clearly, these array sizes are too large to manage in today’s computers. In order to manage the system, the domain (1024, 65536, etc.) is broken down into discrete intervals. Many 3D-LUT sizes are in use today. Some usual ones are 16^3, 17^3, 32^3, 33^3, 64^3 and 65^3. Others are possible. For a 17^3 3D-LUT in 10-bit space, the nodes of each of the three channels range from 0 to 1023 with an increment of 64. For the 65^3 3D-LUT, the number of input nodes (each input point is called a node) is 65^3 (274,625), which is far less than the full 16-bit 3D array (281,474,976,710,656).

3D-LUTs are stored in the computer with an implied input. If one looks at a text-based 3D-LUT, the numbers are the output sets and the input values are implied by the order of the list. Normally, there are two types of implied orders, the R fastest (or commonly referred to as inside) and the B fastest (most common).

In order to use these 3D-LUTs, a 3D interpolation method is employed. Common ones are trilinear and tetrahedral interpolation, which use the surrounding input nodes and interpolate linearly (occasionally with other types, such as cubic interpolation) to obtain the output color R’G’B’ from the input RGB. Less commonly used 3D-LUT types do not have ordered nodes; they are lists of input RGB to output R’G’B’ and are called cloud-based systems, which have their own types of interpolation methods. For these systems, the interpolation times are usually longer due to the increased processing involved.

Types of 3D-LUTs

Digital camera input LUT

Used as a color corrector input LUT to transform the camera RGB into some desired standard or creative look.
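
To make the implied node order and the trilinear interpolation described under 3D-LUT more concrete, here is a minimal Python sketch. It is an illustration only, not the method of any particular color corrector: the function names are hypothetical, the input is assumed to be scaled to 0 - 1.0, and the list is assumed to be stored R fastest (one of the two implied orders mentioned above).

```python
from itertools import product

def make_unity_lut(size):
    """Build a unity 3D-LUT as a flat list of (r, g, b) outputs, R fastest."""
    step = 1.0 / (size - 1)
    # product() varies its last element fastest, so unpack as (b, g, r)
    # to make R the fastest-changing input in the stored list.
    return [(r * step, g * step, b * step)
            for b, g, r in product(range(size), repeat=3)]

def node(lut, size, r, g, b):
    """Return the output stored at integer node indices (r, g, b)."""
    # The input value is never stored; it is implied by the list position.
    return lut[r + size * (g + size * b)]

def trilinear(lut, size, rgb):
    """Interpolate an RGB triple (each 0..1) through the 3D-LUT."""
    idx, frac = [], []
    for v in rgb:
        x = min(max(v, 0.0), 1.0) * (size - 1)  # position in node units
        i = min(int(x), size - 2)               # lower node, so i + 1 exists
        idx.append(i)
        frac.append(x - i)
    (r0, g0, b0), (fr, fg, fb) = idx, frac
    out = [0.0, 0.0, 0.0]
    # Blend the 8 nodes of the surrounding cube, weighted by proximity.
    for dr, dg, db in product((0, 1), repeat=3):
        w = ((fr if dr else 1 - fr) *
             (fg if dg else 1 - fg) *
             (fb if db else 1 - fb))
        corner = node(lut, size, r0 + dr, g0 + dg, b0 + db)
        for c in range(3):
            out[c] += w * corner[c]
    return tuple(out)

lut = make_unity_lut(17)
print(trilinear(lut, 17, (0.2, 0.5, 0.9)))  # a unity LUT returns roughly the input
```

A B-fastest file would only change the index arithmetic in `node`; the interpolation itself stays the same.
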
Calibration LUT

One type is the film emulation LUT. These types of LUTs are derived from film negative and film print densitometry of discrete patches originating from a film recorder and a film print. These LUTs “calibrate” the display in the color correction room such that the image on the screen is a close match to a print viewed on a film projector. Post houses without their own film projectors can assure proper film-out results with a quality 3D-LUT.

It is possible to achieve the same look without using a LUT as long as the color correction hardware and software have the capability to process the images from the starting position to the desired position and, of course, as long as the desired position’s look is known to the colorist. In the case of the film emulation LUT, it would probably be very error prone and/or time consuming to have a colorist try to match a print on a film projector to the digital display for each scene in a consistent manner.

Creative LUT

Many times a creative look is desired, and a specially created 3D-LUT can assure that the look is applied consistently on varied scenes. The colorist can then concentrate on minor changes from this base look provided by the LUT. Some examples are LUTs derived from older cinema systems such as Technicolor two-strip or bleach bypass. The output of these LUTs can then be altered by the colorist for the desired effect. I have created LUTs that desaturate all colors except reds and maintain the hue and saturation of flesh. This is a very specific LUT to create a starting point for the colorist.

In some cases, the look desired by the production team stretches the ability of the controls on the color correction platform. A properly created 3D-LUT can provide the look while the color correction platform has its controls in the center positions, allowing for further creative control.

Technical LUT

Sometimes a 3D-LUT is used to transform a project from one type of display to another.
Some examples are:
P3 to Rec-709
2D P3 to 3D P3
D6500K P3 to D5500 P3
Rec-709 to P3
Transform standard display (i.e., P3) to a non-standard type such as Plasma, OLED or Laser display

Technical LUTs may be provided by the camera manufacturer for certain purposes. For example, a LUT may be provided to transform the camera data to ACES data for ingest into the color corrector. Post house engineering staff, such as color scientists, can create specific technical LUTs for a variety of purposes.

P3 to Cineon DPX LUT

Some post houses build their workflow around a digital cinema P3 data-centric system. This is because the deliverable to the production is mostly for digital cinema venues and they do not want to limit the image to a film print. Other common display types, such as Rec-709, can be created from the P3 data (with some mild gamut remapping of the larger P3 color system into Rec-709). In these cases, a 3D-LUT is used to transform the P3 data into Cineon 10-bit DPX data for film out if a small number of film prints are requested by the production. The generation of an accurate 3D-LUT for this purpose is very complex. The P3 data can be out of the film print’s color gamut and needs to be gently remapped. I have developed a sophisticated computer model to create these types of LUTs. The film-out results are exceptional.

Viewing LUT (Show LUT)

The Viewing LUT is what the colorist uses in the normal viewing of the digital data in the suite. It depends on the data metric of the source. If the source is a digital camera, then the LUT will convert it to P3 directly, or, if the camera LUT is a camera to Cineon DPX LUT, that LUT can be combined with the Calibration LUT (which converts Cineon DPX to P3) to get the Viewing LUT. So, the Viewing LUT is whatever LUT can take the source data metric and convert it to the metric used for the digital projector (usually normal P3, but it can be Rec-709, P3 @ 6000K white point, P3 @ 6500K white point, etc.).
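
The idea of combining two LUTs into one Viewing LUT can be sketched in a few lines of Python. For brevity this sketch uses 1D-LUTs; combining 3D-LUTs follows the same principle but pushes each output triple of the first LUT through a 3D interpolation of the second. The two curves below are placeholders chosen only so the result is easy to check, not real camera or calibration data, and the function names are hypothetical.

```python
def apply_1d(lut, x):
    """Linearly interpolate x (0..1) through a 1D-LUT with evenly spaced nodes."""
    x = min(max(x, 0.0), 1.0) * (len(lut) - 1)  # position in node units
    i = min(int(x), len(lut) - 2)               # lower node, so i + 1 exists
    f = x - i
    return lut[i] * (1 - f) + lut[i + 1] * f

def combine(first, second):
    """Build one LUT equivalent to applying `first`, then `second`."""
    # Each output of the first LUT becomes an input to the second.
    return [apply_1d(second, y) for y in first]

n = 1024  # one entry per 10-bit code value
# Placeholder curves (assumptions for illustration only):
camera_to_log = [(i / (n - 1)) ** 0.5 for i in range(n)]
log_to_display = [(i / (n - 1)) ** 2.0 for i in range(n)]

viewing = combine(camera_to_log, log_to_display)
# Because the placeholder curves undo each other, `viewing` should be
# very close to a unity LUT, apart from small interpolation error.
print(max(abs(viewing[i] - i / (n - 1)) for i in range(n)))
```

The small residual printed at the end is the interpolation error introduced by baking two LUTs into one, which is one reason combined LUTs are usually built at the highest practical resolution.
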
Float conversion LUT

The Float conversion LUT can be useful; however, very few LUT interpolation algorithms can effectively work with the implied data range of EXR. For example, a normal Cineon DPX 0-1023 log data set is roughly -0.05 to 13.5 in linear EXR. But the "usable" EXR range for display is 0 - 1.0, which means that the LUT must convert the EXR range into a viewable range. Commonly, since the LUTs can only handle an implied range of 0 - 1.0, the data is manipulated by the colorist to take the wider EXR range and artistically compress it into the domain the LUT can handle.

One problem with the EXR metric is that it is normally linear. Linear data going through a LUT is not efficient. An EXR linear range of -0.05 to 13.5 spans roughly 14.0, and if the displayable range is 0 - 1.0, that is only ~7% of it (1.0/14.0 = 0.0714). That means that of the LUT's nodes (33^3, 65^3, etc.), only 7% are used, and interpolation artifacts can show up. For a 33^3 LUT, there are 33^3 = 35937 nodes, and 7% is only 2567 nodes used; the rest are not used. Schemes such as introducing a gamma or log on the data can effectively compress it for LUT conversion, but it takes some color expertise to create the LUT. On most modern color correction platforms, when they use the LUT conversion, the system re-scales the data to a 0 - 1.0 LUT input to interpolate through the LUT. Mathematically other domains are possible, but typically all positive values are used.

Inverting a LUT

Whether the LUT used is a 1D-LUT or a 3D-LUT, the process of going backwards is possible but plagued with some problems. If the transform going forward through the LUT gives the same output for multiple inputs, then it is not possible to “guess” which of the inputs produced the output. Further, the output domain of the LUT may be different from the input domain. For example, it is common to want the RGB triad that gives a specific XYZ on a display.
Going forward from RGB to XYZ is relatively simple, since we can show a set of patches on a display, measure the XYZ values, and create a 3D-LUT. However, the domain of the XYZ is limited by the gamut of the RGB primaries and white point of the display. The inverse 3D-LUT needs to accommodate the full XYZ range possible, and complex gamut mapping techniques need to be applied to produce an operationally correct 3D-LUT. Even with these techniques, the results are statistically sound but may not be entirely accurate.

There are certain LUTs that cannot be inverted. For example, a LUT that creates black and white (or some tone like sepia) from a color image cannot be inverted: a color image cannot be derived from a single black and white image.

The creation of LUTs

Many techniques are used to create LUTs. 1D-LUTs and 3D-LUTs may be created by applying a mathematical transform to a unity LUT. In the case of a 1D-LUT, a unity LUT is an array where the values are the indices (for example, a unity 1D-LUT gives an output of 233 for an input of 233). A unity 3D-LUT has the output RGB set equal to the input RGB. Some examples of LUTs that are created mathematically are Rec-709 to linear, linear to sRGB, and gamma (2.6 gamma for digital cinema) to linear XYZ.

LUTs that are not created from equations come from measured data derived from actual color patches (either on film or from measurements of values sent to a display device) or from very complex computer models of the system. For LUTs built from measured color patches, usually only a small portion of the total color space of the LUT is measured, and the nodes not measured are interpolated via conventional techniques. Systems like Truelight (from FilmLight) use a bit over 1200 film patches (read via an auto-reading densitometer); where the measurements are taken from displays, many more patches can be measured, depending on the characteristics of the measuring device.
A larger number of patches usually reduces error in the created 3D-LUT. Certain 3D-LUTs, e.g. the P3 to Cineon DPX LUT, benefit from large patch sets. Typically, I use 9000-10000 film patches to create these 3D-LUTs. If fewer patches are measured than there are nodes in the 3D-LUT, some error is introduced by the interpolation method.

Mitch Bogdanowicz

-

A change that solves the issue was implemented Monday night, and the website should now be available for most of our clients. Please do not be alarmed if it doesn’t work for you yet. The solution is implemented, but it might take a bit of time to take effect. We will of course continue to provide assistance wherever required.

-

Thanks for the feedback Brandon, completely my mistake. It's fixed now 🙂

-

We continue our DaVinci Resolve 17 training with a brand new high-end course in Color Management Workflow. This is an intermediate course for colorists and visual effects artists taught by our master instructor Lee Lanier. In this training series you will learn to work with both display-referred and scene-referred management, including ACES; applying DRTs with SDR and HDR projects; using DaVinci Wide Gamut; matching camera profiles with color space transforms, DCTLs and Cinematch; how to set up your projects for multiple color space outputs; RED IPP2 and RAW workflow; how to use the Gamut tool; LUTs, CSTs and OpenColorIO in Fusion; an overview of the Colorspace Aware Tools; and much more. The DaVinci Resolve project files and footage are available for download so that you can easily follow along.

Download project files

The DCTL that comes with the project files was created with a tool called Resolve Math Extra (OFX), developed by Paul Dore. It can be downloaded from this site.

About the instructor

Lee Lanier has created visual effects on numerous feature films for Walt Disney Studios and PDI/DreamWorks. Lee is a world-renowned expert in the video effects field, has written several popular high-end software books, and has taught at the Gnomon School of Visual Effects in Hollywood.

Who is this course designed for?
Colorists
Visual effects artists

Lessons overview

L01: Introduction
L02: Color terminology and workflow overview
L03: Display referred vs scene referred
L04: Display referred space and LUTs
L05: Adding LUTs and DCTLs to Resolve
L06: Matching cameras with an OFX plug-in
L07: Mixing camera footage in display referred
L08: Color management in Fusion
L09: Resolve color management
L10: Working with ACES
L11: RCM and ACES with Fusion
L12: Adding color transformations in Fusion
L13: Using OpenColorIO
L14: Matching cameras with Cinematch
L15: Switching the RCM wide gamut
L16: Setting up HDR in RCM and ACES
L17: Using color scopes with SDR and HDR
L18: Working with colorspace aware tools and HDR grade

Software required

The free version of DaVinci Resolve or DaVinci Resolve Studio

-

When the Red Komodo 6K camera hit the market with its exceptional image quality, global shutter and small, versatile form factor at an affordable price, it took the industry by storm. The modular design of the camera empowers users to customize it for any production environment, and we’ll take a look at some of the accessories available.

Camera cage

A camera cage provides protective armor and several mounting points for accessories. It allows you to connect different battery solutions, monitors, microphones and handle options, and to attach the camera to tripods and gimbals. This cage from Tilta is probably the most popular one. Other popular cage providers for Red Komodo are Smallrig, Shape, 8Sinn, Nitze, Kondor Blue and the more expensive Wooden Camera and Bright Tangerine.

Monitor

The small screen on the top of the Red Komodo camera body is not ideal for shooting, so adding a traditional 5” to 7” monitor is a better option. That will also let you control many settings via the touchscreen, like white balance and shutter angle, and even more advanced features like aperture changes of electronic lenses and autofocus point selection. Popular camera monitors to check out are the Atomos Ninja V 5”, SmallHD Pro Focus 5” and the Portkeys 5” BM series. In the 7-inch monitor range, have a look at the Atomos Shinobi. For an even larger screen, the 9” BM090 from Flanders Scientific is worth checking out. It’s a camera version of FSI’s high-quality broadcast and color grading monitors, full of useful features.

Handles

The most popular side handle for Red Komodo is Red’s own low-profile Outrigger handle with an integrated Record Start/Stop button. Red also offers the Wing Grip top handle, but there are many third-party alternatives to choose from, from the same companies that create cages for the camera.
Some top handles, like this one from Smallrig, have mounting holes for a tilt monitor mount in front, which can be important for center-aligning the monitor for better balance. There are also handles, like this one from Smallrig, that can slide in both directions or be turned 180 degrees for situations that require a different grip. A popular but more expensive option is this one from Wooden Camera.

Battery System

The Red Komodo body can take two small Canon batteries (BP-955 or BP-975), Red’s own Redvolt BP, or cheaper Red Approved alternatives from Blue Shape or Jupio. There are also some Red Compatible (not Red Approved) alternatives that provide enough current to power the camera and have been tested and deemed safe for use. Just remember that these batteries do not communicate with the camera and therefore cannot display battery percentage and remaining time. For more power, consider adding a V-mount plate and larger V-mount batteries.

Audio

Most cinema cameras are not designed for high-quality audio recording and require an external audio adapter. The internal audio comes with excessive amounts of hiss when it’s gained up, and the adapter is needed to get a clean audio signal. When using the adapter, the camera preamps can stay on low gain for best performance while the adapter does all the heavy lifting. That way we can push the gain and get high-quality audio. The most popular one is the RED-DXA from Beachtek. It’s a phantom-powered dual XLR adapter with low-noise preamplifiers. It gives you everything you need to record cinema-quality audio. It can be mounted on the rods with rail blocks, or installed on the side of the cage with the click-release plate that comes with the adapter. It’s designed for RED, and its industrial design integrates nicely with the look of the camera body.

While talking audio, our favorite microphone is the MKH 8060 from Sennheiser. It’s the successor of the legendary MKH 416 and a state-of-the-art shotgun microphone.
Technically speaking, the main difference of the MKH 8060 is that its off-axis sound is not affected by any coloring and that it has a lower end, which filmmakers often ask for. It’s also a bit shorter and therefore ideal for a small camera like the Komodo. To mount the microphone, this microphone holder can be mounted directly on every cage.

Lens Equipment, follow focus and matte box

A Red system often consists of a base plate and 15mm rods that can be attached to the camera cage. The rods can be used with a lens support or to attach a follow focus system. We recommend the Mini Follow Focus from Tilta, as it’s super small, rock solid and the most affordable option on the market. If you choose to use non-cine lenses on the Komodo, follow focus gear rings are needed. Non-cine lenses do not have built-in 0.8 module gearing for use with follow focus systems, so gear rings have to be purchased separately. Luckily, Outlast Cinema Gears can be ordered super cheap from Couto Camera for both the focus and aperture barrels. They have gear rings for every lens, available in any size, and ship quickly worldwide. It’s important to know that ultra-rigid aluminium gears make noise during pulls, but the ones from Couto are near-silent, which is important when a shotgun microphone is mounted on the camera. For a small camera using non-cinema lenses, a lightweight matte box is preferred. Tilta offers the smallest solution on the market, which is designed to be mounted directly onto the lens.

Media Management

The Komodo takes standard CFast 2.0 cards, and several manufacturers offer RED Approved Media. Angelbird and Exascend are trusted by the industry and offer the highest sustained performance (read and write speed) throughout the capacity of their cards. That means you can record without dropped frames in all cinematic modes.
Angelbird and Exascend are also the only ones who offer approved media up to a capacity of 1TB, and their cards are built with magnetic, x-ray, electric discharge, overload and temperature protection.

To take advantage of the incredible dynamic range in the camera and achieve superior image quality with the right post-production process, watch this training series from Lowepost. The RED Post Production training is designed for beginners and no post-production experience is necessary.

Additional accessories

There are tons of additional accessories available for Red Komodo, including RF to PL mounts, I/O modules, filter mounts, cables, and small cable management devices. New accessories hit the market every month, and you will find what you need to build your ultimate camera. Happy building!

This post might include affiliate agreements; for full information click here.