Beginner's Guide to Stereoscopic Understanding

John Daro

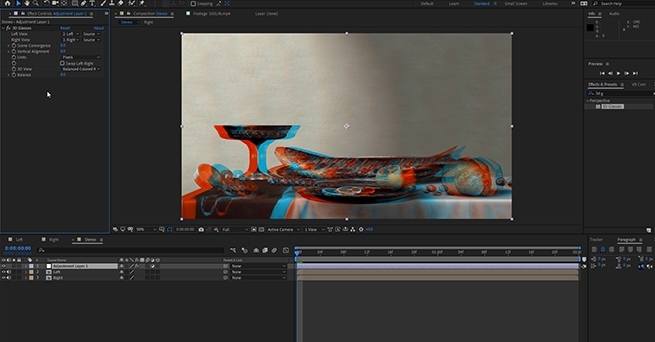

My interest in stereoscopic imaging started in 2006. One of my close friends, Trevor Enoch, showed me a stereograph that was taken of him while out at Burning Man. I was blown away and immediately hooked. I spent the next four years experimenting with techniques to create the best, most comfortable, and most immersive 3D I could. In 2007, I worked on “Hannah Montana and Miley Cyrus: Best of Both Worlds Concert,” directed by Bruce Hendricks and shot with cameras provided by Pace. At the time, Jim Cameron and Vince Pace were already developing the capture systems for the first “Avatar” film. The challenge was that no software package yet existed for posting stereo footage. To work around this limitation, Bill Schultz and I slaved two Quantel IQ machines to a Bufbox to control the two color correctors simultaneously. This solution was totally inelegant, but it was enough to win us the job from Disney. Later during the production, Quantel came out with stereo support, eliminating the need to color each eye on independent machines.

We did what we had to in those early days. When I look back at that film, there is a lot that I would do differently now. It was truly the wild west of 3D post, and we were writing the rules (and the code for the software) as we went. Over the next few pages, I’m going to lay out some basics of 3D stereo imaging. The goal is for you to have a working understanding of the process and the technical jargon by the end. Hopefully I can help some other post professionals avoid a lot of the pitfalls and mistakes I made as we blazed the trail all those years ago.

Camera 1, Camera 2

Stereopsis is the term that describes how we collect depth information from our surroundings using our sight. Almost everyone is familiar with stereo sound, where two separate audio tracks are played simultaneously out of two different speakers. We take that information in using both of our ears (binaural hearing) and create a reasonable approximation of the direction that sound is coming from in space. This approximation is calculated from the offset in time between the sound hitting one ear and the other.
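To make the timing idea concrete, here is a rough Python sketch of how an arrival-time offset could map to a direction. It assumes a deliberately simple model that is not from this article: the ears are two points a fixed distance apart, the source is far away, and the speed of sound is constant. The function names and the 0.18 m ear spacing are illustrative assumptions, not measured values.

```python
import math

SPEED_OF_SOUND_M_S = 343.0  # assumption: air at roughly room temperature
EAR_SPACING_M = 0.18        # assumption: illustrative ear-to-ear distance


def arrival_offset_s(azimuth_deg, ear_spacing_m=EAR_SPACING_M):
    """Time offset between the two ears for a distant source.

    azimuth_deg is the angle of the source away from straight ahead;
    0 means dead center, 90 means directly to one side.
    """
    extra_path_m = ear_spacing_m * math.sin(math.radians(azimuth_deg))
    return extra_path_m / SPEED_OF_SOUND_M_S


def azimuth_from_offset_deg(offset_s, ear_spacing_m=EAR_SPACING_M):
    """Invert the model above: estimate direction from a time offset."""
    ratio = offset_s * SPEED_OF_SOUND_M_S / ear_spacing_m
    ratio = max(-1.0, min(1.0, ratio))  # clamp so asin stays in its domain
    return math.degrees(math.asin(ratio))


if __name__ == "__main__":
    offset = arrival_offset_s(30.0)
    print(f"offset for a source 30 degrees off center: {offset * 1e6:.0f} microseconds")
    print(f"direction recovered from that offset: {azimuth_from_offset_deg(offset):.1f} degrees")
```

Under these assumptions the offsets are well under a millisecond, which gives a sense of how small a cue the brain is working with.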

Stereoscopic vision works in much the same way. Our eyes have a point of interest. When that point of interest is very far away, our eyes are parallel to one another. As we focus on objects that are closer to us, our eyes converge. Do this simple experiment right now. Hold up your finger as far away from your face as you can. Now slowly bring that finger towards your nose, noting the angle of your eyes as it gets closer to your face. Once your finger is about 3 inches away from your face, alternately close one eye and then the other. Notice how the view changes as you alternate between your eyes: camera 1, camera 2, camera 1, camera 2. Your finger shifts position from left to right. You also see “around” your finger more in one eye than in the other. This offset between your two eyes is how your brain makes sense of the 3D world around you. To capture this depth for films, we need to recreate this system by using two cameras spaced roughly the same distance apart as your eyes.
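The finger experiment can be put into rough numbers. The sketch below assumes simple symmetric geometry that the article does not spell out: the point of interest sits straight ahead on the midline between the eyes, and the eyes are 64mm apart (the average interpupillary distance quoted in this guide). The function name `convergence_angle_deg` is made up for illustration.

```python
import math

IPD_MM = 64.0          # average interpupillary distance used in this guide
MM_PER_INCH = 25.4


def convergence_angle_deg(distance_mm, ipd_mm=IPD_MM):
    """Angle between the two lines of sight, in degrees.

    Assumes the target is centered between the eyes, so each eye
    rotates inward by the same amount: atan((ipd / 2) / distance).
    """
    if distance_mm <= 0:
        raise ValueError("distance_mm must be positive")
    return math.degrees(2.0 * math.atan((ipd_mm / 2.0) / distance_mm))


if __name__ == "__main__":
    for label, distance_mm in [
        ("finger at 3 inches", 3 * MM_PER_INCH),
        ("finger at arm's length (assumed 600mm)", 600.0),
        ("object 100m away", 100_000.0),
    ]:
        angle = convergence_angle_deg(distance_mm)
        print(f"{label}: {angle:.2f} degrees")
```

Running it shows the pattern described above: the angle is large when the finger is near your nose and shrinks toward zero (parallel eyes) as the point of interest moves far away.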

Camera Rigs

The average interpupillary distance is about 64mm. Since most feature-grade cinema cameras are rather large, special rigs are needed to align them. Side-by-side rigs are an option when your cameras are small, but when they are not, you need to use a beam splitter configuration.

Beam splitter rig in an "over" configuration.

Essentially, a beam splitter rig uses a half-silvered mirror to “split” the view in two. This allows the cameras to shoot at a much closer inter-axial distance than they could in a parallel side-by-side rig. Both of these capture systems are for the practical shooting of 3D films.
