MichaelTiemann

Premium member, 16 posts


Everything posted by MichaelTiemann


Professional Color Grading Techniques in DaVinci Resolve

MichaelTiemann commented on Lowepost's course in The Art of Color Grading

What you are seeing in this image is the result of cinematic LIGHTING first and foremost, and of grading and correction secondarily. Notice how the men on the other side block the practical lights from shining too much into the camera, while allowing lots of beautiful side-lighting to fall on the leg. The light on the woman's face and shoulders is even easier to conceal: it is placed out of the shot altogether. Never underestimate the power of getting the image you want by starting with the light that hits the sensor...

Professional Color Grading Techniques in DaVinci Resolve

MichaelTiemann commented on Lowepost's course in The Art of Color Grading

Another way to say the same thing: Color Correction is distinct from Color Grading in that Color Correction is about hitting certain normal targets (balanced whites, proper black levels, white levels, and midtones), while Grading is about making creative choices (intentional color casts, lifted/colored blacks, altered/non-linear contrast curves, altered/quantized hues, saturation, etc.).

Generally speaking, it's "Garbage In, Garbage Out": if you have wildly divergent shots coming into the grading process, each grade is going to be unique (and uniquely error-prone) unto itself. The more you can normalize (aka correct) your incoming clips, the more reliably you can apply a grade that behaves consistently in support of your story and aesthetics. And of course the grading process may create the need for some kind of post-grade correction so that you can be sure your outputs are legal for the intended delivery format.

Most LUTs (especially Log -> Gamma LUTs) presume you are feeding them properly balanced and properly exposed image data, so making corrections before applying LUTs ensures that the LUTs perform both optimally and as expected. Of course there are always opportunities to break the rules, but it is best to break them consciously, rather than breaking them unwittingly and then wandering in oblivion, wondering why all the rules you learned are not working as they should. In short, corrections should get you to standard waypoints, and grades should take you to creative intentions.
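To make the "normalize first, then grade" idea concrete, here is a minimal Python sketch. It assumes linear RGB floats, a patch in each shot that should read neutral gray, and a toy "grade" (a warm tint plus a smoothstep contrast curve); all names and numbers are hypothetical. Two shots of the same gray card with opposite casts end up identical after the shared grade only when each one is white-balanced first.

```python
# Minimal sketch: correct (normalize) each shot, then apply one shared grade.
# Assumptions: linear RGB floats, a patch per shot that should read neutral,
# and a toy grade; every name and number here is hypothetical.

def white_balance_gains(gray_patch):
    """Per-channel gains that make the reference patch neutral (equal RGB).
    Green is left unchanged so overall exposure stays roughly the same."""
    r, g, b = gray_patch
    return (g / r, 1.0, g / b)

def correct(pixel, gains):
    """Correction step: scale each channel by its balancing gain."""
    return tuple(c * k for c, k in zip(pixel, gains))

def grade(pixel):
    """Toy creative grade: a warm tint, then a smoothstep contrast curve."""
    tint = (1.05, 1.0, 0.92)
    out = []
    for c, t in zip(pixel, tint):
        v = min(max(c * t, 0.0), 1.0)          # clamp to 0..1
        out.append(v * v * (3.0 - 2.0 * v))    # smoothstep S-curve
    return tuple(out)

# Two shots of the same gray card with opposite color casts.
shot_a_gray = (0.50, 0.40, 0.30)   # warm cast
shot_b_gray = (0.32, 0.40, 0.52)   # cool cast

graded_a = grade(correct(shot_a_gray, white_balance_gains(shot_a_gray)))
graded_b = grade(correct(shot_b_gray, white_balance_gains(shot_b_gray)))
uncorrected_diff = max(abs(x - y) for x, y in
                       zip(grade(shot_a_gray), grade(shot_b_gray)))

print("corrected, then graded:", graded_a, graded_b)
print("largest channel difference when grading uncorrected shots:", uncorrected_diff)
```

The grade itself never changes; only the correction step differs per shot, which is what lets one grade behave the same way across divergent clips.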

Color Management Workflow in DaVinci Resolve 16

MichaelTiemann commented on Lowepost's course in legacy

I deal with this in one of two ways:

1. Create a Group for each unique RAW interpretation needed, then use the Pre-Clip Group grade to suit. You can use different LUTs on a per-Group basis, but RAW parameters don't carry into the Pre-Clip group, and there is no Pre-Clip option among the Project, Clip, and Camera Metadata choices.
2. Use Versions, which do encapsulate RAW parameters. If you have multiple clips from the same camera using the same RAW interpretation, this is a winner.

Professional Color Grading Techniques in DaVinci Resolve

MichaelTiemann commented on Lowepost's course in The Art of Color Grading

So far I have seen three major use cases:

1. When channel information becomes so imbalanced that a simple gain function will not do. For example, in underwater photography the red channel becomes so starved that it cannot be reconstructed by simply increasing gain. In such a case, the red channel can become a mask that colors as red the highly detailed luma information of the green and blue channels.
2. When it is desired to shrink a wide color gamut (containing colors all the way around the color circle) to two complementary colors (teal/orange, red/cyan, green/magenta) or perhaps three primary-ish colors.
3. Changing overall color balance without trying to adjust individual hues. It works especially well at taking out red, green, or blue (by feeding the color you want to remove to the other two channels).

It really helps to have some color wheels and to just look at how pushing the knobs in various directions changes those color wheels. In DaVinci Resolve you could have an image with two color wheels. Put a power window around the second wheel, adjust the color matrix within the power window, annotate the transformation with a text window, and voilà. For extra credit, you can put an expression into Fusion and have the coefficient values printed as text, so you can automate the movement of sliders and see how the values transform the wheel.
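An RGB mixer is essentially a 3x3 matrix: each output channel is a weighted sum of the input channels. The minimal Python sketch below assumes rows are output channels and columns are input channels, and illustrates use case 3 with hypothetical coefficients: feeding half of Red into the Green and Blue outputs turns a reddish gray into a neutral one.

```python
# Minimal sketch: an RGB mixer as a 3x3 matrix (rows = outputs, columns = inputs).
# The row/column convention and the coefficients are assumptions for illustration.

def mix(pixel, matrix):
    """Return (R', G', B'), each a weighted sum of the input R, G, B."""
    r, g, b = pixel
    return tuple(row[0] * r + row[1] * g + row[2] * b for row in matrix)

IDENTITY = ((1.0, 0.0, 0.0),
            (0.0, 1.0, 0.0),
            (0.0, 0.0, 1.0))

# Use case 3 (hypothetical values): remove a red cast by feeding half of the
# Red input into the Green and Blue outputs.
REMOVE_RED = ((1.0, 0.0, 0.0),   # Red out   = 1.0 R
              (0.5, 1.0, 0.0),   # Green out = 0.5 R + 1.0 G
              (0.5, 0.0, 1.0))   # Blue out  = 0.5 R + 1.0 B

reddish_gray = (0.8, 0.4, 0.4)
print(mix(reddish_gray, IDENTITY))    # unchanged: (0.8, 0.4, 0.4)
print(mix(reddish_gray, REMOVE_RED))  # → (0.8, 0.8, 0.8), neutral but brighter
```

Note that this also brightens the pixel, since the outputs only gain energy; in practice you would probably pull the level back down afterwards.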

Color Management Workflow in DaVinci Resolve 16

MichaelTiemann commented on Lowepost's course in legacy

Thanks for the quick response. I found that I needed to connect only one level down: Project Files/Resolve Projects. "color" was the database name I was looking for. Thanks again... gonna learn something new today!

Color Management Workflow in DaVinci Resolve 16

MichaelTiemann commented on Lowepost's course in legacy

Can you please explain the best way to attach the project files to Resolve? I know that there's a way to connect to a database, but it requires knowing not only the location of the database, but also its name. Nowhere have I been able to find the name of your database, so even though you supply the "Resolve Projects" directory tree, I don't know how to attach to the databases you are providing. Thanks!

Color Management Workflow in DaVinci Resolve 16

MichaelTiemann commented on Lowepost's course in legacy

Looking forward to it. Please consider covering some of the issues I surfaced here: https://forum.blackmagicdesign.com/viewtopic.php?f=33&t=114444

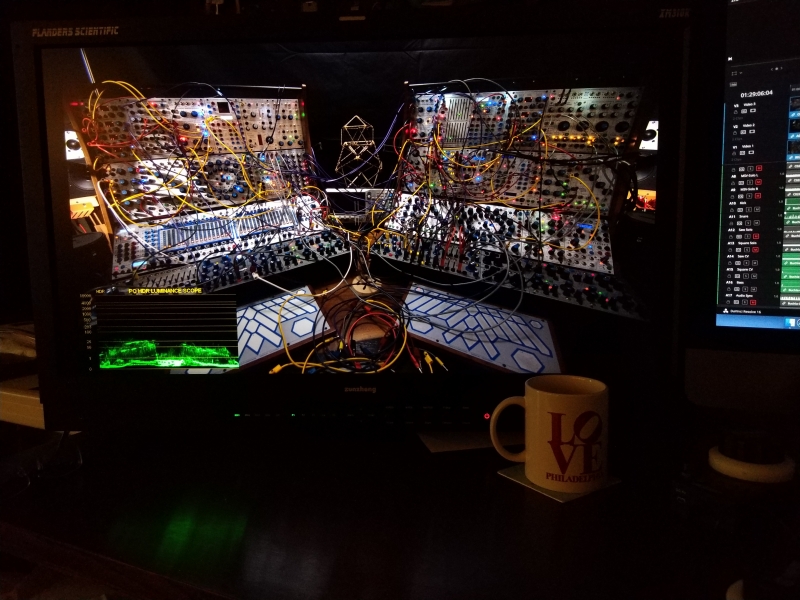

This January I made the commitment to really delve into HDR grading, and I purchased an FSI XM-310K monitor. I was wondering how I was going to find the time to really focus on this brave new world, and then shortly thereafter I found myself in quarantine. With lots of time. I returned to my music studio to create some new content that would be fun to grade in HDR, and I composed and performed this experimental music on my Buchla modular analog synthesizer. It's been getting good reviews within the Buchla community, but I'm interested to hear your feedback on the grade, if you have an HDR monitor on which to review it, and also your thoughts on how well the SDR version holds up to scrutiny.


The DM-240 really shines when it comes to its built-in tools. The LCD panel technology does have a certain amount of black bleed, which is why it's important to have a bias light behind it. It is not OLED (which gives virtually pure blacks), nor is it light-modulated (like the XM-310K's zones or the XM-311K's pixels). But it is great at what it does.


My plans for 2020 included getting serious about HDR grading. I've been shooting on RED cameras since 2014, and I've wanted to produce HDR since YouTube enabled it in late 2016. But there were many obstacles. No more. I bought an FSI XM-310K, which I pair with my DM-240 for Dolby Vision-style side-by-side HDR/SDR grading. To answer your questions:

General questions

Have you graded HDR? Yes.

How often? Every chance I get.

What type of content are you working on? Creative music projects recorded at Manifold Recording.

Who for? (broadcaster, on-demand streaming service, etc.) Music studio clients and myself.

Workflow questions

Are you grading HDR first or SDR first? Last year it was SDR first, with HDR checked on a consumer-grade OLED. This year (and hereafter) it's HDR first, with SDR versions of all HDR deliverables.

Are you using a Dolby Vision workflow? Yes.

If you're not using a Dolby Vision workflow, are you doing a manual trim or using some automatic tone mapping? I am using Dolby Vision AND doing manual trims.

How much time are you given for the creation of your secondary delivery format, i.e. 1 day for an SDR trim pass? I do SDR checks as I review HDR renders. By the time I'm finished with HDR for delivery, SDR is just a matter of playing the HDR deliverables with their corresponding SDR LUT.

Do you grade only in PQ (even without a Dolby Vision workflow) or do you work in HLG? PQ only.

What grading software are you using? DaVinci Resolve. I recently posted my ideas for how to work best in 16.2.2 to their Feature Request forum, and I am trying to open a discussion about what will make it better in the future.

On what platform is the final online being carried out, i.e. Avid, Premiere Pro, Flame, etc.? DaVinci Resolve.


I posted this in the Resolve BMD forum, but it's been buried by hundreds of posts about stability, performance, and other stuff. I'm hoping that within this community, the topic of HDR-friendly workflows is closer to top-of-mind. I'm trying to figure out the most idiomatic (but not idiotically difficult) way to export a still from a project so that it's very easy for me to post or email the image to folks who will look at it on their phone or desktop PC. I can tell you that the Rec. 2100 PQ stills I'm exporting are absolutely NOT sharing-friendly out of the box. I can think of many ways to do this, all of which are disruptive to the current state of my project. Surely there is a straightforward way to do this that doesn't involve all manner of manual triage. Right now I'm getting the best results by using my camera phone (?) in manual mode to take a picture of my FSI XM-310K monitor displaying the image with a D65 white point. There must be a better way!
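For readers wondering what a "sharing-friendly" conversion involves, here is a minimal Python sketch of one way to turn a Rec. 2100 PQ pixel into an 8-bit sRGB pixel outside of Resolve. It assumes BT.2020 primaries, a 203 cd/m² HDR reference white (ITU-R BT.2408) and a 1000 cd/m² mastering peak; the tone curve is a crude extended Reinhard chosen for illustration, not Resolve's or Dolby's mapping, and the helper names are hypothetical. In practice you would run it per pixel over a decoded 16-bit still.

```python
# Minimal sketch: Rec. 2100 PQ (BT.2020) pixel -> 8-bit sRGB pixel for sharing.
# Assumptions: 203 cd/m^2 reference white, 1000 cd/m^2 peak, and a crude
# extended-Reinhard tone curve (hypothetical, not Resolve's or Dolby's).

# SMPTE ST 2084 (PQ) constants.
M1 = 2610 / 16384
M2 = 2523 / 4096 * 128
C1 = 3424 / 4096
C2 = 2413 / 4096 * 32
C3 = 2392 / 4096 * 32

# Linear BT.2020 -> BT.709 primaries (ITU-R BT.2087), rounded to 4 places.
BT2020_TO_BT709 = ((1.6605, -0.5876, -0.0728),
                   (-0.1246, 1.1329, -0.0083),
                   (-0.0182, -0.1006, 1.1187))

def pq_to_nits(e):
    """ST 2084 EOTF: non-linear signal (0..1) -> luminance in cd/m^2."""
    p = e ** (1 / M2)
    return 10000.0 * (max(p - C1, 0.0) / (C2 - C3 * p)) ** (1 / M1)

def nits_to_pq(nits):
    """Inverse EOTF, used here only to check the round trip."""
    y = (nits / 10000.0) ** M1
    return ((C1 + C2 * y) / (1 + C3 * y)) ** M2

def tone_map(x, white):
    """Extended Reinhard: maps 0 -> 0 and `white` -> 1.0."""
    return x * (1 + x / (white * white)) / (1 + x)

def srgb_encode(v):
    """sRGB transfer function on a value clamped to 0..1."""
    v = min(max(v, 0.0), 1.0)
    return 12.92 * v if v <= 0.0031308 else 1.055 * v ** (1 / 2.4) - 0.055

def pq2020_to_srgb8(rgb, ref_white=203.0, peak=1000.0):
    linear = [pq_to_nits(c) / ref_white for c in rgb]     # 1.0 = reference white
    linear709 = [max(sum(m * c for m, c in zip(row, linear)), 0.0)
                 for row in BT2020_TO_BT709]              # clip out-of-gamut negatives
    mapped = [tone_map(c, peak / ref_white) for c in linear709]
    return tuple(round(255 * srgb_encode(c)) for c in mapped)

print(pq2020_to_srgb8((0.58, 0.58, 0.58)))  # a near-reference-white gray
```

The gamut step matters as much as the tone curve: viewing BT.2020-primary values as if they were sRGB is one reason raw PQ stills look flat and desaturated on ordinary screens.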


Professional Color Grading Techniques in DaVinci Resolve

MichaelTiemann commented on Lowepost's course in The Art of Color Grading

Lesson 16 glosses over the fact that you turned the woman's blonde hair to ginger.

Professional Color Grading Techniques in DaVinci Resolve

MichaelTiemann commented on Lowepost's course in The Art of Color Grading

I had the same question, so I opened up the clips, set up the node tree, and started playing. At first it appears to be somewhat magical, but it suddenly makes sense with an RGB waveform monitor (or an RGB parade scope) and a vectorscope. What I noticed is that when the Red output is also fed by the Blue channel, the vectorscope shows everything being pulled and stretched toward the R target. When the Green output is also fed by the Blue channel, the red-stretched blob rotates and stretches toward the Yl target. You can then watch the magic happen as you raise the Green input into the Blue output. What was a large outstretched limb of color pointing towards Yl squishes very quickly to a very thin line that points between R and Cy. Those two complementary colors provide a credible balanced look that simply has no other colors to it: no Mg, no B, no G, and no Yl. But that narrow balanced line is not aligned with the skin-tone line. This can be fixed by rotating the Hue, or by subtracting Red and re-adjusting Green to get back to the thin wispy line. When the remaining colors are aligned with the skin-tone line, the skin tones look natural, and the balancing complementary colors on the other side of the center make the look. I got this with the following:

- Red output: 1 R + 0.9 B
- Green output: 1 G + 0.9 B
- Blue output: 0.91 G + 1 B
- Hue rotation: 43.4

Without Hue rotation, I got the lines to line up with Blue = -0.5 R + 1.41 G + 1 B. When I raised the Green in the Blue output to 2.0, I needed to drop Red to -1.07, and I observed the need for a Hue rotation of 53.30 to get back to the skin-tone line. I also noticed that using larger numbers in the Blue output channel results in greater saturation, which may or may not be desirable. But I think the fundamental operation we see here is using the RGB Mixer to take us from 3 colors to 2 while letting the skin tone be the skin tone.
I will also say that it is INVALUABLE to have a DaVinci Resolve Mini Panel with which to twiddle two knobs that send the colors in opposite directions, so that one can maintain the target of the skin-tone line while seeing how all the other colors react to various parametric alternatives!
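The vectorscope behavior described in this comment can be roughly quantified. The Python sketch below applies the quoted mixer coefficients (without the hue rotation) to the six primary and secondary colors and compares how elongated their chroma cloud is before and after, using a principal-axis variance ratio. Assumptions: rows are output channels, the six colors stand in for the color wheel, and chroma is BT.709 Cb/Cr computed on linear values for simplicity, whereas a real vectorscope reads the encoded signal.

```python
# Minimal sketch: how much an RGB mixer collapses the hue circle on a vectorscope.
# Assumptions: rows = output channels; BT.709 Cb/Cr computed on linear values;
# the six primaries/secondaries stand in for the color wheel.
import math

def mix(pixel, matrix):
    r, g, b = pixel
    return tuple(row[0] * r + row[1] * g + row[2] * b for row in matrix)

def chroma(rgb):
    """BT.709 (Cb, Cr) of an RGB triple."""
    r, g, b = rgb
    y = 0.2126 * r + 0.7152 * g + 0.0722 * b
    return ((b - y) / 1.8556, (r - y) / 1.5748)

def spread(points):
    """Principal-axis analysis of 2-D chroma points. Returns the
    major/minor variance ratio and the major-axis angle in degrees,
    measured from the +Cb axis."""
    n = len(points)
    mx = sum(p[0] for p in points) / n
    my = sum(p[1] for p in points) / n
    sxx = sum((p[0] - mx) ** 2 for p in points) / n
    syy = sum((p[1] - my) ** 2 for p in points) / n
    sxy = sum((p[0] - mx) * (p[1] - my) for p in points) / n
    mid = (sxx + syy) / 2
    rad = math.sqrt(((sxx - syy) / 2) ** 2 + sxy ** 2)
    angle = 0.5 * math.degrees(math.atan2(2 * sxy, sxx - syy))
    return (mid + rad) / (mid - rad), angle

WHEEL = [(1, 0, 0), (1, 1, 0), (0, 1, 0), (0, 1, 1), (0, 0, 1), (1, 0, 1)]

# Coefficients quoted in the comment (hue rotation not modelled here).
POST_MIX = ((1.0, 0.0, 0.9),    # Red out   = 1 R + 0.9 B
            (0.0, 1.0, 0.9),    # Green out = 1 G + 0.9 B
            (0.0, 0.91, 1.0))   # Blue out  = 0.91 G + 1 B

before, _ = spread([chroma(c) for c in WHEEL])
after, axis = spread([chroma(mix(c, POST_MIX)) for c in WHEEL])
print(f"elongation before {before:.1f}, after {after:.1f}, axis {axis:.1f} deg")
```

A modest ratio means the colors are spread around the wheel; a large ratio means they have collapsed toward a single line through the center, which is the two-complementary-color look described above. The hue rotation would then turn that line onto the skin-tone line without changing its elongation.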

Professional Color Grading Techniques in DaVinci Resolve

MichaelTiemann commented on Lowepost's course in The Art of Color Grading

Aha, the key lesson I took from the earlier lessons was the importance of applying exposure correction before the LUT, not its order relative to other corrections. I'll think harder about that...

Professional Color Grading Techniques in DaVinci Resolve

MichaelTiemann commented on Lowepost's course in The Art of Color Grading

In lesson 8 at 4:09 you say "Normally you'd want to dial in exposure first, as explained in earlier lessons". Because of Resolve's Order of Operations (in Chapter 109, Node Editing Basics), the Offset operation takes place before the 3D LUT, so you are, in fact, demonstrating the same approach as explained in the earlier lessons. I don't disagree that exposure correction before the LUT gives a very natural and intentional look. I'm just quibbling with the explanation.
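A toy model shows why an exposure change placed before a log-to-display LUT behaves differently from one placed after it. In this minimal Python sketch, the log curve, its constants, and the "LUT" (decode, Reinhard shoulder, 1/2.4 gamma) are all hypothetical stand-ins rather than any camera's curve or Resolve's LUTs. A shot that is one stop under is fixed either by adding one stop's worth of code value before the LUT, or by adding a constant lift after the LUT that matches mid gray.

```python
# Minimal sketch: exposure offset before vs. after a log-to-display "LUT".
# Everything here is hypothetical: a toy log curve and a toy display transform.
import math

STOP_CODE = 0.075      # hypothetical code-value change per stop of light
MID_GRAY_CODE = 0.41   # hypothetical code value of 18% gray

def log_encode(linear):
    """Toy log curve: each doubling of light adds STOP_CODE to the code value."""
    return MID_GRAY_CODE + STOP_CODE * math.log2(linear / 0.18)

def log_decode(code):
    return 0.18 * 2 ** ((code - MID_GRAY_CODE) / STOP_CODE)

def lut(code):
    """Toy log-to-display transform standing in for a 3D LUT:
    decode, Reinhard shoulder, then a 1/2.4 display gamma."""
    x = log_decode(code)
    return (x / (1 + x)) ** (1 / 2.4)

patches = [0.02, 0.18, 0.9]                         # shadow, mid gray, highlight
reference = [lut(log_encode(p)) for p in patches]   # correctly exposed
captured = [log_encode(p / 2) for p in patches]     # shot one stop under

# Offset before the LUT: +1 stop in log space restores every patch.
fixed_before = [lut(c + STOP_CODE) for c in captured]

# Offset after the LUT: a constant lift chosen so that mid gray matches.
lift = reference[1] - lut(captured[1])
fixed_after = [lut(c) + lift for c in captured]

for name, ref, b, a in zip(("shadow", "gray", "highlight"),
                           reference, fixed_before, fixed_after):
    print(f"{name:9s} target {ref:.3f}  before-LUT {b:.3f}  after-LUT {a:.3f}")
```

In this model the pre-LUT offset restores every patch, because adding a constant to a log signal is the same as scaling scene light; the post-LUT lift can only match the one tone it was set on, and the shadows come out visibly lifted.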